A research duo from the University of Washington has developed a computer interface that brings telepathy one step closer to becoming reality.

• General explanation of how our brains communicate and how the researchers are applying this to their device.

• Description of the experiment conducted for the study.

• How the technology works.

• Results of the experiment.

• Possible explanations for the margin of error.

Andrea Stocco and Chantel Prat have invented a brain-to-brain computer interface that allows people to communicate without saying a single word. What’s more, the communication happens remotely, over the Internet.

Stocco, an assistant professor of psychology at the University of Washington, said in a statement that “Evolution has spent a colossal amount of time to find ways for us and other animals to take information out of our brains and communicate it to other animals in the forms of behavior, speech and so on.”

He went on to explain that this process requires a translation. People can only share a part of what their brains process. So what he and Prat are doing is reversing the process step by step by “opening up this box and taking signals from the brain” and putting them inside another person’s brain, all using minimal translation.

Basically, the thoughts a person has are translated into flashes of light that are transmitted into the other person’s brain.

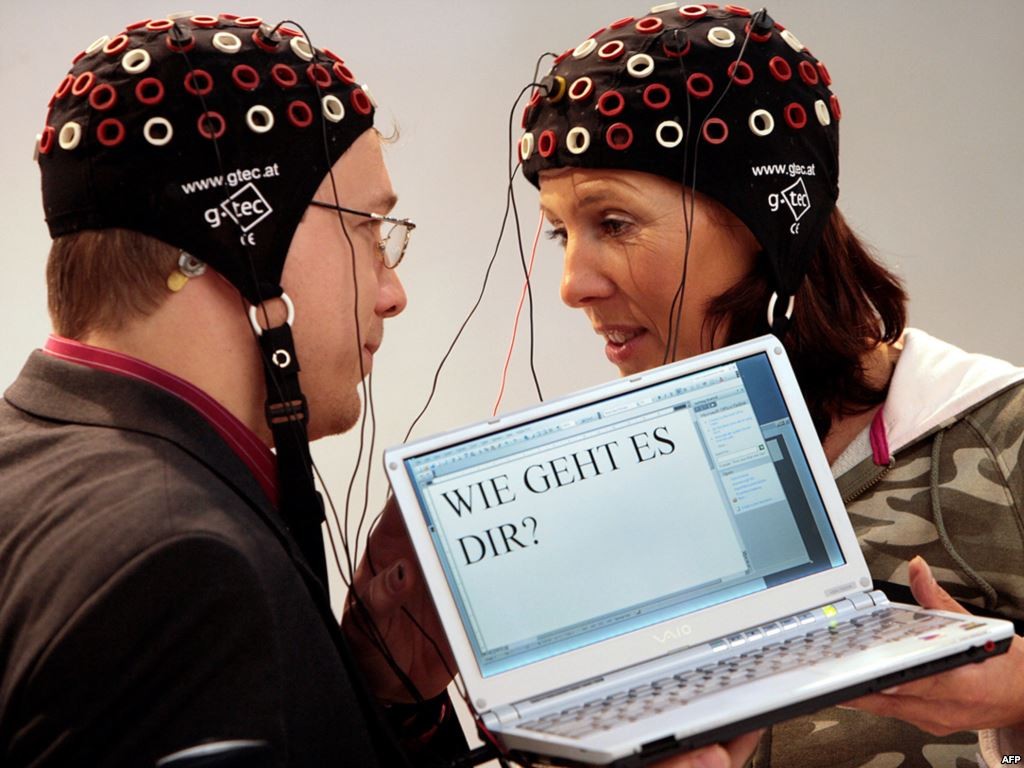

For their project, the research duo paired subjects together, then split each pair up, placing the two in separate rooms a mile apart. The respondent wore an electrode cap that the researchers connected to an electroencephalography (EEG) machine, a device that records electrical brain activity.

The inquirer sat across from a magnetic coil capable of stimulating his or her visual cortex and causing him or her to see a phosphene, a flash of light that can take the shape of a thin line, a wave, or a blob.

Stocco and Prat showed the respondent an object on the computer screen and asked them to focus on it. The inquirer, meanwhile, was shown a list of potential objects that the respondent might be focusing on, along with a list of questions about the object that could be answered with “yes” or “no”. Examples of questions include “Is it sweet?” and “Is it a liquid?”.

All the inquirer had to do to send a question to the respondent was click on it. The respondent then simply had to look at his or her screen and focus on the word “yes” or the word “no” in order to send the answer. Each of the answers was associated with a phosphene that flashed at a different frequency, and the electroencephalography reading linked to each answer would then send a signal to the inquirer’s coil.

It’s worth mentioning that only affirmative answers were able to generate responses that were large enough to make the inquirer see the phosphene associated with the answer. So from the inquirer’s point of view, seeing a flash of light meant the answer was “yes”, and not seeing anything meant the answer was “no”.
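The question-and-answer exchange described above can be sketched as a small simulation. This is only an illustration, not the researchers' software: the object list, the function names, and the assumption of a perfectly reliable channel are all invented here (the real experiment was noisy, which is why guesses were not always correct).

```python
# Hypothetical objects and their yes/no properties, invented for illustration.
OBJECTS = {
    "honey": {"Is it sweet?": True, "Is it a liquid?": True},
    "sugar cube": {"Is it sweet?": True, "Is it a liquid?": False},
    "water": {"Is it sweet?": False, "Is it a liquid?": True},
    "salt": {"Is it sweet?": False, "Is it a liquid?": False},
}


def respondent_answer(target, question):
    """The respondent knows the object and focuses on 'yes' (True) or 'no' (False)."""
    return OBJECTS[target][question]


def inquirer_sees_flash(answer_is_yes):
    """Assumed ideal channel: only a 'yes' is strong enough to produce a phosphene."""
    return answer_is_yes


def play_round(target, questions):
    """Return the objects still consistent with what the inquirer perceived."""
    candidates = set(OBJECTS)
    for question in questions:
        saw_flash = inquirer_sees_flash(respondent_answer(target, question))
        # A flash is read as "yes", no flash as "no".
        candidates = {
            name for name in candidates if OBJECTS[name][question] == saw_flash
        }
    return candidates


if __name__ == "__main__":
    print(play_round("honey", ["Is it sweet?", "Is it a liquid?"]))
```

In this idealized version, every question halves the list of candidates. A missed flash or an uncertain answer would send the inquirer down the wrong branch.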

The results showed that the subjects were able to guess what the correct object was 72 percent of the time.

Stocco and Prat say that subjects may have guessed wrong 28 percent of the time for a number of reasons: the respondent having no idea what the right answer was, the respondent focusing on both answers, or the inquirer struggling to recognize the phosphene.

The findings were published earlier this week, on Wednesday (September 23, 2015), in the journal PLOS ONE.

Image Source: pixabay.com
